Tech Things: EU AI Act, Biden Admin Order, Google Gemini

Note: I’ve heard from more than a few friends who really enjoy Matt Levine’s Money Stuff and wish there were some version of it for tech news: some combination of relevant, explainable to a layperson, and (most importantly) funny and fun to read. I’ve been writing a lot more recently and figured I’d take a stab at filling the niche. I’m hoping to write a digest/roundup each week, though we’ll see how long that lasts… in the meantime, thanks for reading!

EU AI Act

Back in April 2021, the wise folks running the European Commission drafted a bill called the Artificial Intelligence Act. This was a full year and a half before the release of ChatGPT, and only 3 months after I left Google to start SOOT. So in the grand scheme of AI things, very prescient!

The aim of the act was to provide a regulatory and legal framework for AI in the EU. From the text of the act:

the Commission puts forward the proposed regulatory framework on Artificial Intelligence with the following specific objectives:

·ensure that AI systems placed on the Union market and used are safe and respect existing law on fundamental rights and Union values;

·ensure legal certainty to facilitate investment and innovation in AI;

·enhance governance and effective enforcement of existing law on fundamental rights and safety requirements applicable to AI systems;

·facilitate the development of a single market for lawful, safe and trustworthy AI applications and prevent market fragmentation.

To achieve those objectives, this proposal presents a balanced and proportionate horizontal regulatory approach to AI that is limited to the minimum necessary requirements to address the risks and problems linked to AI, without unduly constraining or hindering technological development or otherwise disproportionately increasing the cost of placing AI solutions on the market.

Lofty goals, but I have a few problems.

First, the act defines AI as

(a)Machine learning approaches, including supervised, unsupervised and reinforcement learning, using a wide variety of methods including deep learning;

(b)Logic- and knowledge-based approaches, including knowledge representation, inductive (logic) programming, knowledge bases, inference and deductive engines, (symbolic) reasoning and expert systems;

(c)Statistical approaches, Bayesian estimation, search and optimization methods.

I’m not a lawyer, but this seems ridiculously broad?

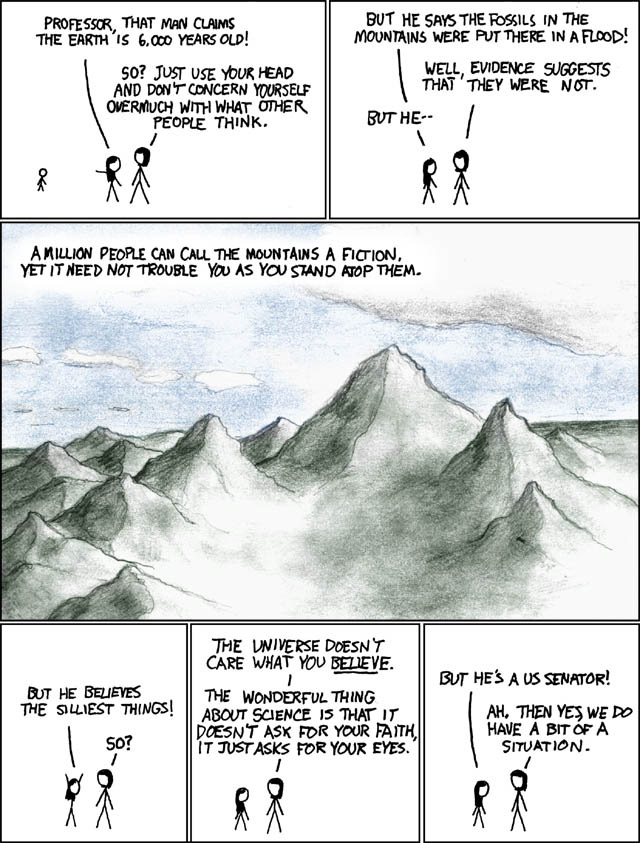

A straightforward reading of the text of this act suggests it applies to everything from ChatGPT down to linear regression. And, look, I tend to lean towards the ‘AI is going to kill us all’ side of things. But I still want to be able to use deep learning in my cars and banks and vacuums.
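To show how low that bar sits, here is ordinary least-squares linear regression in a few lines of standard-library Python. Read literally, clause (c) (“Statistical approaches, Bayesian estimation, search and optimization methods”) would seem to cover even this. It’s a minimal sketch, and the data points are made up for illustration.

```python
# Ordinary least squares for y = slope * x + intercept, standard library only.
# Arguably both a "statistical approach" and an "optimization method".
from statistics import mean


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Return (slope, intercept) minimizing the sum of squared errors."""
    x_bar, y_bar = mean(xs), mean(ys)
    # Closed-form OLS solution: covariance(x, y) / variance(x)
    num = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    den = sum((x - x_bar) ** 2 for x in xs)
    slope = num / den
    intercept = y_bar - slope * x_bar
    return slope, intercept


# Hypothetical data: points lying exactly on y = 2x + 1
slope, intercept = fit_line([0, 1, 2, 3], [1, 3, 5, 7])
print(slope, intercept)  # 2.0 1.0
```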

I’m tempted to give these guys some slack, because defining ‘AI’ was always going to be hard: industry experts who have been in the space for years are still debating whether it’s just matrix multiplication or something deeper. But then I remember, oh wait, Andrew Ng isn’t writing legislation! If Andrew Ng is like, “actually linear regression is AI,” we can all go “haha Andrew you’re so funny” and get on with our lives. But it’s a lot more of a problem when it’s coming from a regulator.

Second, the act has a very long list of uses of AI that are explicitly prohibited. The list includes:

“using subliminal techniques beyond a person’s consciousness in order to materially distort a person’s behavior”

“social score leading to…detrimental or unfavourable treatment of certain natural persons or whole groups thereof”

“real-time remote biometric identification systems”

Again, not a lawyer, definitely not an EU expert. But…uh…isn’t the first thing the entire online ads industry? And isn’t the second thing the entire credit analysis industry?

I’m going to mildly break my NDA here¹: when I was last at Google, techniques that would definitely qualify as AI under this act were used basically everywhere. Especially in ads serving. I can’t imagine that that’s decreased in the last few years. And though I have never worked at a credit agency, surely they are doing, like, basic statistical analysis? I think a lot of people have an ax to grind about the negative externalities of both of these things. And on any day ending in y, you can find me griping about how much I hate Experian, or how much I think everyone should use AdBlock.

But also, probably having accurate credit scores and free access to Google Search is a net good for society!

Unsurprisingly, the tech industry writ large has argued that this act goes way overboard; OpenAI has outright threatened to shut off access in the EU. But maybe more surprising, at least some EU member states also agree that the act was too broad. From the Financial Times:

Addressing an audience in Toulouse on Monday, the French president attacked the new Artificial Intelligence Act agreed last Friday, saying: “We can decide to regulate much faster and much stronger than our major competitors. But we will regulate things that we will no longer produce or invent. This is never a good idea.”

Macron said he was concerned that the new law means the EU will enforce the world’s toughest regime on so-called foundational models, the technology that underpins generative AI products such as OpenAI’s ChatGPT, which can churn out quantities of humanlike words, images and code in seconds.

He cited the case of Mistral, the eight-month-old Paris-based AI start up that has been valued at €2bn in a blockbuster funding round, as an example of “French genius” that was an early leader in building AI models.

But Macron added: “When I look at France, it is probably the first country in terms of artificial intelligence in continental Europe. We are neck and neck with the British. They will not have this regulation on foundational models. But above all, we are all very far behind the Chinese and the Americans.”

Macron’s comments may presage a new battle over the final terms of the AI Act, which still needs to be ratified by member states over the coming weeks. France, alongside Germany and Italy, are in early discussions about seeking alterations or preventing the law from being passed.

In retrospect, it is absolutely not surprising that France would come out swinging against this act: Mistral is an unexpected darling of the AI world, and several other French AI startups have followed in its footsteps by earning staggering valuations through massive funding rounds. I can’t help but wonder if the EU would be so quick to regulate AI if the major AI companies were based in Europe.

An astute reader may notice I’ve left out that third bullet, the one about biometric ID systems. This is actually the only one of the three that I agree with, but unfortunately the act guts itself by carving out an exception for law enforcement: “In a duly justified situation of urgency, the use of the system may be commenced without an authorisation and the authorisation may be requested only during or after the use.” Great, thanks, I guess.

I suppose I should be happier about all of this. I am very pro slowing down our current rate of AI research — again, AI may kill us all, etc. etc. But I just don’t think Europe is the place where AGI is going to appear. Instead, we’re going to just get worse products (and maybe have a harder time applying for loans) for the interim period before AI does go rogue. And any country that is serious about being in the AI race (France) is clearly going to ignore or minimally enforce these laws.

As an aside: the worst thing about trying to do unbiased research on the EU AI Act is having to deal with constant reminders of the EU’s last failed attempt to regulate the tech sector. I’m speaking, of course, of the now-ubiquitous cookie banners on every single website, including (of course!) the site that hosts the actual text of the AI Act!

Maybe I’d be more inclined to believe that this time the regulation will actually be helpful, if only I could see past the blinding rage of having to reject more cookies.

Biden AI Executive Order

Earlier I said “I can’t help but wonder if the EU would be so quick to regulate AI if the major AI companies were based in Europe.”

Turns out, the answer is maybe yes! Because even though virtually every major AI player is located in the US — including OpenAI, Anthropic, Google, Meta, NVIDIA, Microsoft — back in October the Biden Admin put out an executive order that states in part:

My Administration places the highest urgency on governing the development and use of AI safely and responsibly, and is therefore advancing a coordinated, Federal Government-wide approach to doing so. The rapid speed at which AI capabilities are advancing compels the United States to lead in this moment for the sake of our security, economy, and society.

The EO is mostly toothless, though. It goes on to describe a whole bunch of committees that will write up a whole bunch of proposals that will likely never actually be used for anything beyond helping a politician seem like they are “doing something” in the current moment.

Except for this bit.

The Secretary of Commerce, in consultation with the Secretary of State, the Secretary of Defense, the Secretary of Energy, and the Director of National Intelligence, shall define, and thereafter update as needed on a regular basis, the set of technical conditions for models and computing clusters that would be subject to the reporting requirements of subsection 4.2(a) of this section. Until such technical conditions are defined, the Secretary shall require compliance with these reporting requirements for:

(i) any model that was trained using a quantity of computing power greater than 10^26 integer or floating-point operations, or using primarily biological sequence data and using a quantity of computing power greater than 10^23 integer or floating-point operations; and

(ii) any computing cluster that has a set of machines physically co-located in a single datacenter, transitively connected by data center networking of over 100 Gbit/s, and having a theoretical maximum computing capacity of 10^20 integer or floating-point operations per second for training AI.

As far as I’m aware, no models currently reach these limits. GPT4 was trained with maybe 5x less compute than 10^26.

Still.

These are numbers that are within reach: the compute used to train frontier models has been doubling pretty quickly these days. And though the Executive Order only carries transparency requirements for now, the fact that actual numbers were put to paper means that someone in the Biden admin is serious about this. Actual companies may face actual reporting requirements. Not strictly unheard of, but it sure feels like a shot across the bow.
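To put “within reach” in numbers: if GPT4 used roughly a fifth of the 10^26 threshold (the rough estimate above), then crossing it takes only log2(5) ≈ 2.3 doublings of training compute. The sketch below does that arithmetic. The 6-month doubling period in the usage line is a hypothetical input, not a measured figure.

```python
import math


def doublings_to_threshold(current_ops: float, threshold_ops: float = 1e26) -> float:
    """How many times training compute must double to go from current_ops to threshold_ops."""
    return math.log2(threshold_ops / current_ops)


def months_to_threshold(current_ops: float, doubling_months: float,
                        threshold_ops: float = 1e26) -> float:
    """Months until the threshold is crossed, assuming a constant doubling period."""
    return doublings_to_threshold(current_ops, threshold_ops) * doubling_months


# Rough GPT4 estimate from the text: about a fifth of 10^26.
print(round(doublings_to_threshold(2e25), 2))  # 2.32
# Hypothetical doubling period of 6 months (an input, not a measurement).
print(round(months_to_threshold(2e25, 6), 1))  # 13.9
```

Because the gap is measured in doublings, it grows only logarithmically: even a model 100x below the line is fewer than 7 doublings away.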

One other quick note: a surprisingly large part of the EO was about using AI for the development of biohazards — lethal plagues, neurotoxins, etc. I’m…pretty sure most people aren’t working on this? But also, why did the Biden admin feel they needed to call that out specifically in so much depth? What do they know???
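The interim reporting conditions quoted above are simple enough to write down as a pair of predicates. It is worth noticing how much lower the bar is for models trained primarily on biological sequence data (10^23 vs 10^26 operations, a factor of 1,000), which fits the EO’s focus on biohazards. This is a minimal sketch, not a compliance tool. The function and constant names are made up, “capacity of 10^20” is assumed to mean “at least 10^20”, and the “transitively connected” networking condition is simplified to a single link speed.

```python
# Interim reporting conditions from the EO excerpt quoted above (hypothetical names).
GENERAL_MODEL_OPS = 1e26         # any model trained with more than this
BIO_MODEL_OPS = 1e23             # models trained primarily on biological sequence data
CLUSTER_NETWORK_GBITS = 100      # "over 100 Gbit/s" datacenter networking
CLUSTER_PEAK_OPS_PER_SEC = 1e20  # assumption: read as "at least 10^20 ops/s"


def model_must_report(training_ops: float, primarily_bio_data: bool) -> bool:
    """True if a model's total training compute exceeds the relevant threshold."""
    if training_ops > GENERAL_MODEL_OPS:
        return True
    return primarily_bio_data and training_ops > BIO_MODEL_OPS


def cluster_must_report(single_datacenter: bool,
                        network_gbits: float,
                        peak_ops_per_sec: float) -> bool:
    """True if a co-located cluster meets all three interim conditions.

    Simplification: "transitively connected by ... networking of over
    100 Gbit/s" is reduced to one network_gbits number.
    """
    return (single_datacenter
            and network_gbits > CLUSTER_NETWORK_GBITS
            and peak_ops_per_sec >= CLUSTER_PEAK_OPS_PER_SEC)


# Rough GPT4 estimate from the text (~5x below 10^26): no report needed.
print(model_must_report(2e25, primarily_bio_data=False))  # False
# The same compute spent on a hypothetical biology model: report needed.
print(model_must_report(2e25, primarily_bio_data=True))   # True
```

The bio branch is the interesting one. The same 2×10^25 operations that sit comfortably under the general limit are 200 times over the biology limit.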

Google Gemini

[Note: I previously worked at Google]

Imagine you’re Sundar Pichai. You’re the CEO of Google. Your company was, for years, seen as the dominant player in AI; you even went on stage years earlier and announced that Google is an ‘AI first company’ way before that was a thing. Google was pumping out tons of papers, its products were getting better every day, and life was good. You could get a bunch of equity and a bunch of money and mostly just steer the ship.

And then, in the last year, a smaller, nimbler startup seems to have used your technology to build a product before you did. And that product is groundbreaking and revolutionary and, most importantly, is eating into your company’s moat.

What do you do?

I think most people would say something like: “get a team to launch a competing product ASAP.” A more in-depth answer might add: “integrate that competing product into everything Google has, leveraging the insane distribution of Google products to make it ubiquitous and get a global first-mover advantage.” I like to think I might have said something like that.

That said, I am not the CEO of Google, so maybe there are strategic considerations that I am missing. What Google actually did was spend about a year hyping up the release of an internal model called Gemini that was meant to be the GPT4 competitor. Then they released a flashy video, people thought the flashy video was too flashy, maybe unethically flashy, and now Google is in the news for, uh, lying to people. All according to plan? From The Verge:

Google just announced Gemini, its most powerful suite of AI models yet, and the company has already been accused of lying about its performance.

...

Google aired an impressive “what the quack” hands-on video during its announcement earlier this week, and columnist Parmy Olson says it seemed remarkably capable in the video — perhaps too capable.

The six-minute video shows off Gemini’s multimodal capabilities (spoken conversational prompts combined with image recognition, for example). Gemini seemingly recognizes images quickly — even for connect-the-dots pictures — responds within seconds, and tracks a wad of paper in a cup and ball game in real-time. Sure, humans can do all of that, but this is an AI able to recognize and predict what will happen next.

But click the video description on YouTube, and Google has an important disclaimer:

“For the purposes of this demo, latency has been reduced, and Gemini outputs have been shortened for brevity.”

That’s what Olson takes umbrage with. According to her Bloomberg piece, Google admitted when asked for comment that the video demo didn’t happen in real time with spoken prompts but instead used still image frames from raw footage and then wrote out text prompts to which Gemini responded. “That’s quite different from what Google seemed to be suggesting: that a person could have a smooth voice conversation with Gemini as it watched and responded in real-time to the world around it,” Olson writes.

People were right to be skeptical! As soon as I saw the video, I messaged a friend something pretty similar!

Love being right about things.

Look, I actually feel kinda bad for Google here. They were pretty clearly not trying to lie to people: they literally released a ‘how it’s made’ blog post describing…well…how the video was made. People who got up in arms about Google ‘lying’ saw the video from Google first, didn’t do any additional research, and then felt betrayed when they learned the ‘truth’ (from Google).

Still, from a business strategy perspective, this is a really bad look. Google needed this thing to go off without a hitch, but a) it didn’t, and b) even setting aside the marketing snafu, they didn’t actually release the model. What they released publicly on Bard is a smaller version of Gemini that is more comparable to GPT3.5 than to GPT4, the latter of which, by the way, came out in March!

So the current strategy is:

Announce a model 9 months after your competition;

Show only slight improvements on benchmarks;

Don’t actually release the thing for people to use.

I love Google and still firmly believe in their ability to do good work (see Waymo), but this is a real headscratcher. For now, my $20 AI chatbot subscription still goes to OpenAI.

¹ For any lawyers or future court transcripts reading this: this is a joke, I am not breaking any NDAs.